Camera frame rate describes how many images a camera can acquire per second, and it is often treated as a headline specification when evaluating high-speed imaging systems. In dynamic experiments, inspection workflows, or fast biological processes, frame rate directly determines how much temporal detail can be captured.

However, the specified maximum frame rate is not a fixed value. It depends on sensor architecture, region of interest (ROI), exposure time, readout mode, and data interface bandwidth. In practice, the achievable frame rate is the result of multiple interacting factors. Understanding these factors requires looking beyond frames per second and examining how frame time is constructed inside the camera system.

What Is Camera Frame Rate?

Camera frame rate refers to the number of frames a camera can acquire per second under a defined set of operating conditions. It is typically expressed in frames per second (FPS) and represents how quickly successive images can be captured and made available for processing or storage.

Frame rate determines the temporal resolution of an imaging system. In dynamic applications—such as particle tracking, high-speed inspection, or rapidly changing biological processes—higher frame rates allow more detailed observation of motion and transient events.

However, frame rate is not an isolated specification. The maximum achievable FPS depends on camera mode, region of interest (ROI), exposure time, bit depth, and interface bandwidth. A quoted “maximum frame rate” usually assumes specific conditions, such as reduced ROI or a particular readout mode.

Understanding what truly limits frame rate requires examining how long it takes to acquire and read out a single frame—known as the frame time—which is explored in the next section.

Frame Rate vs Frame Time vs Line Time

Frame rate is commonly expressed in frames per second (FPS), but FPS is not a primary physical parameter. It is the inverse of the time required to acquire and read out a single frame.

Frame rate = 1 / Frame time

To understand what determines frame rate, we must therefore examine how frame time is constructed.

What Makes Up Frame Time?

Frame time represents the total time required to produce one complete image. In most CMOS cameras, this includes:

● Exposure time (how long the sensor collects light)

● Sensor readout time (how long it takes to convert and transfer pixel values)

● Data transfer time (interface transmission to the host computer)

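These stages can run concurrently, so frame time is usually set by the slowest stage rather than by the sum of all three. When exposure time is short relative to readout time, frame rate is typically limited by the readout process. When exposure time is long, it may instead become the dominant limiting factor.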

Line Time — The Fundamental Sensor Constraint

For CMOS sensors, the primary internal factor limiting frame rate is the line time. Line time is the time required for a row of analog-to-digital converters (ADCs) to measure and digitize one row of pixels.

In most architectures, each row is processed sequentially. As a result, the total readout time for a frame is determined by the number of active rows multiplied by the line time:

Frame read time = Line time × Number of rows

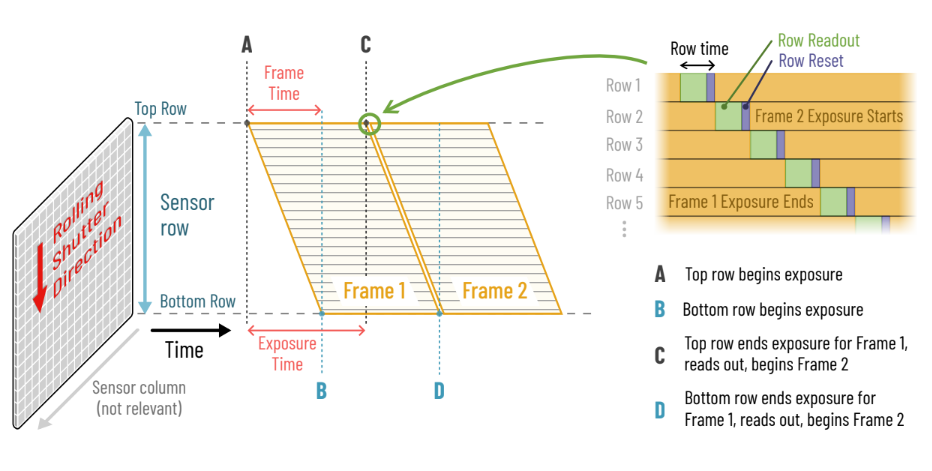

Figure 1: Introduction to 'Parallelogram' rolling shutter timing diagrams

Left: Plot of sensor row (y axis) versus time (x axis), with yellow parallelograms marking the exposure of each camera row due to the action of the rolling shutter.

Right: A zoom in to the individual row level, illustrating the role readout and reset play in determining rolling shutter line time.

This explains why reducing the region of interest (ROI)—specifically the number of pixel rows—can significantly increase frame rate. Halving the number of rows approximately halves the readout time and can nearly double the achievable FPS, assuming other factors remain constant.

Line time itself may vary between readout modes, but within a given mode it is typically fixed.
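
As a rough illustration of the relationship above, the following Python sketch computes the readout-limited frame rate from line time and row count. The 10 µs line time and the function names are assumptions chosen for illustration, not values from any particular camera.

```python
def frame_read_time_s(line_time_s: float, rows: int) -> float:
    """Frame read time = line time x number of active rows."""
    return line_time_s * rows


def readout_limited_fps(line_time_s: float, rows: int) -> float:
    """Frame rate when sensor readout is the only limit."""
    return 1.0 / frame_read_time_s(line_time_s, rows)


# Hypothetical line time of 10 microseconds (assumed, not a real spec).
LINE_TIME_S = 10e-6

for rows in (2048, 1024, 512):
    fps = readout_limited_fps(LINE_TIME_S, rows)
    print(f"{rows:4d} rows -> {fps:6.1f} FPS")
```

Under this simple model, each halving of the row count doubles the computed frame rate. Real cameras may add a small fixed overhead per frame, which is why the text says "nearly" double.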

Theoretical vs Real-World Frame Rate

The “maximum frame rate” quoted in specifications is usually calculated from the frame read time alone. In practice, real-world frame rate may be lower due to:

● Longer exposure times

● Interface bandwidth limitations

● Software or processing delays

For this reason, it is important to distinguish between theoretical maximum FPS and the achievable frame rate under your actual operating conditions.

Sensor-Level Factors That Affect Frame Rate

While line time and frame read time define the fundamental timing limits of a sensor, several configurable parameters at the camera level can significantly influence achievable frame rate.

Region of Interest (ROI)

The number of active pixel rows directly determines frame read time. Reducing the height of the region of interest decreases the number of rows that must be read, thereby shortening the readout duration.

Because frame read time scales approximately with the number of rows, halving the ROI height can nearly double the maximum achievable frame rate—assuming exposure time and interface bandwidth are not limiting factors. For applications focused on a small area of motion or detection, ROI is often the most effective way to increase speed.
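
The same relationship can be turned around to estimate how tall an ROI can be for a target frame rate. The sketch below assumes the camera is readout-limited and that frame read time is exactly line time multiplied by row count. The function name, the 10 µs line time, and the 2048-row sensor are hypothetical.

```python
def max_rows_for_target_fps(line_time_ns: int, target_fps: float, sensor_rows: int) -> int:
    """Largest ROI height (in rows) whose frame read time fits the target frame period.

    Line time is given in integer nanoseconds to avoid rounding surprises.
    """
    frame_period_ns = 1_000_000_000 / target_fps
    rows = int(frame_period_ns // line_time_ns)
    # Clamp to the physical sensor height; never return a negative value.
    return max(0, min(rows, sensor_rows))


# Hypothetical sensor: 2048 rows, 10 us (10,000 ns) line time.
print(max_rows_for_target_fps(10_000, 200, 2048))  # 500
print(max_rows_for_target_fps(10_000, 30, 2048))   # 2048 (full sensor already fits)
```

Some sensors may only accept ROI heights in fixed increments, so the result may need rounding down to the nearest allowed value.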

Binning and Subsampling

Pixel binning combines adjacent pixels before readout or digitization, effectively reducing the output resolution and total data volume. Depending on the sensor architecture, binning may reduce data throughput requirements and sometimes improve effective frame rate.

However, binning does not always reduce the internal line time. In many CMOS designs, rows are still read sequentially even when pixels are combined. As a result, binning may improve data transfer efficiency without significantly altering the intrinsic readout timing.

Bit Depth and Readout Modes

Many scientific cameras offer multiple readout modes, often trading dynamic range for speed. For example, a 16-bit high dynamic range mode may prioritize low read noise and large full-well capacity, while a 12-bit “speed mode” may achieve higher frame rates by reducing data precision or altering amplification settings.

Since higher bit depth increases the amount of data per frame, switching to a lower bit depth can reduce data transfer load and, in some cases, allow higher frame rates—particularly when interface bandwidth is a limiting factor.

Exposure Time and Frame Rate Interaction

Frame rate is not determined by sensor readout time alone. Exposure duration can also limit how quickly successive frames can be acquired.

In general, the maximum achievable frame rate is governed by whichever time component is longer: exposure time or frame read time. If exposure time is shorter than the readout time, then readout limits the frame rate. However, if exposure time exceeds the readout duration, exposure becomes the dominant constraint.

In many rolling-shutter CMOS designs, exposure and readout can partially overlap. While one row is being read out, other rows may already be integrating light for the next frame. Because of this overlap, frame time is set by the longer of exposure and readout rather than by their sum, so an exposure shorter than the full frame read time does not necessarily reduce frame rate.

However, when exposure time becomes longer than the sensor’s total readout time, as in low-light imaging that requires longer integration, frame rate falls in inverse proportion to exposure time. In such cases:

Maximum frame rate ≈ 1 / Exposure time

Understanding whether your system is readout-limited or exposure-limited is essential when optimizing acquisition speed. Increasing gain, improving illumination, or reducing required integration time may be more effective for raising frame rate than adjusting ROI or readout mode alone.
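
A minimal sketch of this readout-limited versus exposure-limited check is shown below. It assumes exposure fully overlaps readout, so the frame period is simply the longer of the two. The function name and the 20 ms frame read time are illustrative assumptions.

```python
from typing import Tuple


def overlapped_max_fps(exposure_s: float, frame_read_time_s: float) -> Tuple[float, str]:
    """Return (max FPS, limiting factor), assuming exposure overlaps readout."""
    if exposure_s > frame_read_time_s:
        return 1.0 / exposure_s, "exposure-limited"
    return 1.0 / frame_read_time_s, "readout-limited"


# Hypothetical frame read time of 20 ms.
READ_TIME_S = 0.020

for exposure_s in (0.005, 0.020, 0.050):
    fps, regime = overlapped_max_fps(exposure_s, READ_TIME_S)
    print(f"exposure {exposure_s * 1e3:5.1f} ms -> {fps:5.1f} FPS ({regime})")
```

With these assumed numbers, shortening a 5 ms exposure would not raise the frame rate at all, while shortening a 50 ms exposure would.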

Interface Bandwidth and Data Throughput Limitations

Even if a sensor can read out frames at high speed, the interface between the camera and the host computer can become the limiting factor.

Each acquired frame must be transferred through a data link—such as USB, Camera Link, or PCIe—to the computer for processing or storage. The required bandwidth depends on:

● Frame size (number of pixels)

● Bit depth (data per pixel)

● Frame rate

Data rate can be estimated as:

Data rate (bytes per second) ≈ Pixels per frame × Bit depth × Frame rate ÷ 8

For example, a 2048 × 2048 sensor operating at 16-bit depth and 100 FPS generates about 839 MB/s of raw data (2048 × 2048 pixels × 2 bytes × 100 frames per second). If the interface cannot sustain this throughput, the effective frame rate will be reduced, or frames may be buffered temporarily inside the camera.

In many systems, reducing ROI or switching to a lower bit depth not only decreases readout time but also reduces required bandwidth, allowing the interface to sustain higher FPS.
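
The data rate estimate can be sketched in Python as follows. The code assumes pixels are transmitted at their nominal bit depth with no padding or protocol overhead, which may not hold for every camera (some may send 12-bit data in 16-bit containers). The function names and the 400 MB/s sustained bandwidth are hypothetical.

```python
def data_rate_bytes_per_s(width: int, height: int, bit_depth: int, fps: float) -> float:
    """Raw data rate in bytes per second for an uncompressed image stream."""
    return width * height * bit_depth / 8 * fps


def interface_limited_fps(bandwidth_bytes_per_s: float, width: int, height: int, bit_depth: int) -> float:
    """Highest frame rate the link can sustain for a given frame size."""
    bytes_per_frame = width * height * bit_depth / 8
    return bandwidth_bytes_per_s / bytes_per_frame


# The example from the text: 2048 x 2048, 16-bit, 100 FPS.
rate = data_rate_bytes_per_s(2048, 2048, 16, 100)
print(f"{rate / 1e6:.1f} MB/s")  # 838.9 MB/s

# Hypothetical link sustaining 400 MB/s (assumed figure).
for bit_depth in (16, 12, 8):
    fps = interface_limited_fps(400e6, 2048, 2048, bit_depth)
    print(f"{bit_depth:2d}-bit -> {fps:5.1f} FPS over the assumed link")
```

The loop shows why lowering bit depth raises the interface-limited frame rate: fewer bytes per frame means more frames fit in the same bandwidth.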

It is therefore important to distinguish between:

● Sensor-limited frame rate, determined by line time and readout

● Interface-limited frame rate, determined by bandwidth and system configuration

Storage speed, driver efficiency, and software overhead can also influence real-world performance, especially during sustained high-speed acquisition.

Understanding where the bottleneck lies—sensor timing or data transfer—is essential when diagnosing frame rate limitations.

Why Your Real Frame Rate Is Lower Than the Specification

The maximum frame rate listed in a camera’s specification sheet is typically calculated under ideal conditions—often using a reduced region of interest (ROI), short exposure time, specific readout mode, and optimal interface configuration. In practice, the achievable frame rate may be lower due to several common factors.

1. Full Sensor vs Reduced ROI

Many maximum FPS values are quoted using a partial ROI. If you operate the camera at full sensor resolution, the increased number of rows directly increases frame read time, reducing achievable frame rate.

2. Exposure Time Exceeds Readout Time

If exposure time is longer than the sensor’s frame read time, it becomes the limiting factor. In low-light imaging, longer integration times naturally reduce maximum FPS, regardless of the sensor’s readout capability.

3. Higher Bit Depth or HDR Modes

Operating in 16-bit or high dynamic range modes increases data volume and may alter readout timing. This can reduce achievable frame rate compared to lower bit-depth “speed” modes.

4. Interface Bandwidth Limitations

USB, Camera Link, and PCIe interfaces all have finite bandwidth. If the required data rate exceeds the sustained interface throughput, the effective FPS drops, or frames must be buffered inside the camera.

5. Software and Processing Overhead

Trigger configuration, buffering strategy, storage speed, and processing load can all affect sustained frame rate during real-world acquisition.

To diagnose frame rate discrepancies, it is important to identify whether the limitation originates from sensor timing, exposure duration, or data throughput. Only after identifying the bottleneck can performance be optimized effectively.
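
One way to approach this diagnosis is to estimate each limit separately and take the lowest. The sketch below does this under simplifying assumptions: exposure overlaps readout, pixels are sent unpadded at their nominal bit depth, and software overhead is ignored. All names and example numbers are hypothetical.

```python
def diagnose_frame_rate(line_time_s, rows, width, bit_depth, exposure_s, bandwidth_bytes_per_s):
    """Return (bottleneck name, achievable FPS, dict of all per-stage limits)."""
    bytes_per_frame = width * rows * bit_depth / 8
    limits = {
        "sensor readout": 1.0 / (line_time_s * rows),
        "exposure time": 1.0 / exposure_s,
        "interface bandwidth": bandwidth_bytes_per_s / bytes_per_frame,
    }
    # The slowest stage (lowest FPS) sets the achievable frame rate.
    bottleneck = min(limits, key=limits.get)
    return bottleneck, limits[bottleneck], limits


# Assumed setup: 2048 x 2048 ROI, 16-bit, 10 us line time,
# 5 ms exposure, 400 MB/s sustained link.
name, fps, limits = diagnose_frame_rate(10e-6, 2048, 2048, 16, 5e-3, 400e6)
for stage, limit in limits.items():
    print(f"{stage:20s} {limit:7.1f} FPS")
print(f"Bottleneck: {name} ({fps:.1f} FPS)")
```

In this assumed setup the interface is marginally the bottleneck, so reducing exposure time would not help, whereas reducing ROI height or bit depth would.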

How to Optimize Frame Rate for Your Application

Optimizing frame rate begins with identifying the true limiting factor in your imaging system. Once the bottleneck is understood, targeted adjustments can significantly improve acquisition speed.

1. Reduce the Region of Interest (ROI)

If full sensor resolution is not required, reducing the number of active rows is often the most effective way to increase frame rate. Since frame read time scales with row count, limiting acquisition to the area of interest can substantially boost FPS.

2. Adjust Exposure Time

When exposure time exceeds readout time, it becomes the limiting factor. Increasing illumination intensity, adjusting gain appropriately, or relaxing signal requirements can allow shorter exposure times and higher achievable frame rates.

3. Select an Appropriate Readout Mode

If available, use a speed-optimized mode when high dynamic range is not critical. Lower bit depth or alternative amplification modes can reduce readout and data transfer burden.

4. Check Interface and Data Throughput

Ensure that the interface bandwidth supports the required data rate. Reducing bit depth, limiting resolution, or upgrading the data link may improve sustained performance.

5. Identify the Dominant Constraint

Frame rate optimization is most effective when changes address the true limiting component—sensor readout, exposure duration, or interface bandwidth—rather than adjusting unrelated parameters.

Conclusion

Camera frame rate is not a fixed specification, but the result of sensor timing, exposure duration, and data throughput working together under specific operating conditions. Understanding the relationship between line time, frame read time, exposure time, and interface bandwidth is essential when evaluating or optimizing acquisition speed. In practice, the achievable frame rate is determined by the slowest component in the imaging chain.

At Tucsen, frame rate performance is engineered and validated within real system constraints—including readout architecture, mode selection, and interface configuration. If your application requires sustained high-speed acquisition, our team can help evaluate the true performance limits within your specific workflow.

Tucsen Photonics Co., Ltd. All rights reserved. When citing, please acknowledge the source: www.tucsen.com

2022/02/25
