Choosing between a monochrome camera and a color camera is a common decision in scientific and industrial imaging. Although both types use similar image sensors, the way they capture light is fundamentally different, which affects sensitivity, spatial resolution, and how color information is produced.

Monochrome cameras record only light intensity, creating grayscale images but capturing more photons at each pixel. Color cameras, in contrast, use filters to separate light into red, green, and blue components, enabling full-color images.

Understanding these differences helps determine which camera type is better suited for a particular imaging application.

How Color Cameras Work: The Bayer Pattern

Monochrome cameras capture only the intensity of light, producing grayscale images, while color cameras produce images with red, green and blue (RGB) values for every pixel.

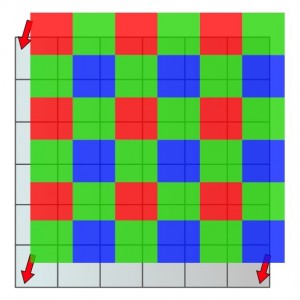

To create a color camera, a grid consisting of red, green and blue filters is placed over a monochrome sensor. This grid is called a Bayer grid. Because of this filter array, each pixel detects only red, green, or blue light.

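To form a color image, the two missing color values at each pixel are estimated from neighboring pixels that carry those colors, so that every pixel ends up with a full set of RGB values. This reconstruction step is called demosaicing. Building colors from red, green and blue components is the same principle computer monitors use to display colors.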
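One simple way to perform this reconstruction is bilinear interpolation: each missing color at a pixel is taken as the average of the nearest pixels that carry that color. The Python/NumPy sketch below assumes an RGGB filter layout (red at even rows and even columns, blue at odd rows and odd columns); real sensors may use other orderings such as BGGR or GRBG, and camera software typically uses more sophisticated algorithms. The function names are illustrative only.

```python
import numpy as np

def bayer_masks(h, w):
    """Boolean masks for an assumed RGGB layout: R at (even, even),
    B at (odd, odd), G at all remaining pixels."""
    rows, cols = np.indices((h, w))
    r = (rows % 2 == 0) & (cols % 2 == 0)
    b = (rows % 2 == 1) & (cols % 2 == 1)
    g = ~(r | b)
    return r, g, b

def box3x3_sum(a):
    """Sum over each pixel's 3x3 neighbourhood, zero-padded at the edges."""
    p = np.pad(a, 1)
    h, w = a.shape
    return sum(p[dy:dy + h, dx:dx + w] for dy in range(3) for dx in range(3))

def demosaic_bilinear(raw):
    """Estimate an H x W x 3 RGB image from a single-channel RGGB mosaic."""
    raw = raw.astype(float)
    out = np.empty(raw.shape + (3,))
    for ch, mask in enumerate(bayer_masks(*raw.shape)):
        known = np.where(mask, raw, 0.0)
        # Average of the same-colour samples found in the 3x3 neighbourhood
        est = box3x3_sum(known) / box3x3_sum(mask.astype(float))
        # Keep the measured value wherever the pixel has this colour filter
        out[..., ch] = np.where(mask, raw, est)
    return out
```

For the RGGB layout, averaging the same-color samples in each 3×3 neighborhood reproduces the bilinear estimate in the image interior: four edge-adjacent neighbors for green at a red or blue pixel, four diagonal neighbors for red at a blue pixel (and vice versa), and two neighbors for red or blue at a green pixel.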

The Bayer grid is arranged in a repeating 2×2 pattern of red, green, and blue filters, with two green pixels for every red or blue pixel. This is because green wavelengths are typically the strongest for many light sources, including sunlight, and because the human eye is most sensitive to green light.
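The 2:1:1 proportion of green to red and blue pixels can be checked by building the filter layout explicitly. This short Python/NumPy sketch assumes the common RGGB ordering of the 2×2 tile (other sensors use BGGR, GRBG or GBRG orderings with the same proportions); `bayer_pattern` and `channel_fractions` are illustrative names, not part of any camera API.

```python
import numpy as np

def bayer_pattern(h, w):
    """Return an h x w array of filter colours for an assumed RGGB layout."""
    tile = np.array([["R", "G"],
                     ["G", "B"]])
    # Repeat the 2x2 tile enough times (ceiling division), then crop to size
    reps = (-(-h // 2), -(-w // 2))
    return np.tile(tile, reps)[:h, :w]

def channel_fractions(pattern):
    """Fraction of pixels covered by each colour filter."""
    colours, counts = np.unique(pattern, return_counts=True)
    return {str(c): int(n) / pattern.size for c, n in zip(colours, counts)}

print(channel_fractions(bayer_pattern(4, 4)))  # {'B': 0.25, 'G': 0.5, 'R': 0.25}
```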

For a more detailed explanation of how color scientific cameras operate and where they are commonly used, see our guide on Color Cameras for Scientific Applications: How They Work and Where They Excel.

Why Are Monochrome Cameras More Sensitive?

Monochrome cameras measure the amount of light that hits each pixel, with no information recorded about the wavelength of the captured photons.

Because monochrome sensors do not use color filters, all photons that reach a pixel can be detected. Many modern sCMOS cameras are available in both monochrome and color versions, allowing researchers to choose between higher sensitivity and direct color imaging depending on the application.

In contrast, color cameras rely on the Bayer filter array, which means that each pixel detects only one color channel. For example, pixels that capture red light are unable to capture green photons that land on them.

As a result, some incoming light is effectively lost in color cameras because certain wavelengths are blocked by the filters.

While additional color information can be valuable, monochrome cameras are generally more sensitive and better at resolving fine detail. In many imaging situations, particularly under low light, this sensitivity advantage over color cameras can be significant.

Monochrome vs Color Cameras

For applications where sensitivity is important, monochrome cameras offer clear advantages: the filters required for color imaging discard some photons, whereas a monochrome camera can detect all photons that reach the sensor.

Because of this difference, monochrome cameras can provide a sensitivity increase of between 2× and 4× compared with color cameras, depending on the wavelength of the light.

Color filter arrays also affect how image detail is captured. In a typical Bayer pattern, only ¼ of pixels detect red light and ¼ detect blue light, so for those channels both sensitivity and effective resolution (the number of sampling points) are reduced by a factor of four. Green light is captured by ½ of the pixels, so for green both are reduced by a factor of two.
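The 2× to 4× range follows directly from these pixel fractions. The idealized Python sketch below assumes perfect filters (each passes its own color band completely and blocks everything else) and otherwise identical pixels, and compares how many photons a monochrome sensor and a Bayer sensor detect when the light falls entirely within one color band. Real filters have overlapping, imperfect transmission curves and most light sources are broadband, so measured differences will vary.

```python
def mono_to_colour_signal_ratio(band, pattern=(("R", "G"), ("G", "B"))):
    """Idealised ratio of photons detected by a monochrome sensor to photons
    detected by a Bayer colour sensor, for light confined to one colour band.

    Assumes ideal filters (full transmission in their own band, none outside)
    and the same quantum efficiency for every pixel."""
    cells = [c for row in pattern for c in row]
    matching = cells.count(band)
    # Mono: every pixel in the 2x2 tile detects the light;
    # colour: only pixels whose filter matches the band do
    return len(cells) / matching

for band in ("R", "G", "B"):
    print(band, mono_to_colour_signal_ratio(band))
# R 4.0
# G 2.0
# B 4.0
```

The same fractions describe spatial sampling: under this model, a red or blue feature is sampled by one pixel in four, and a green feature by one pixel in two.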

Color cameras, however, can produce color images more quickly, simply, and efficiently. Monochrome cameras require additional hardware and multiple images to generate a color image, while color cameras can capture RGB information in a single exposure.

When Should You Use a Monochrome Camera?

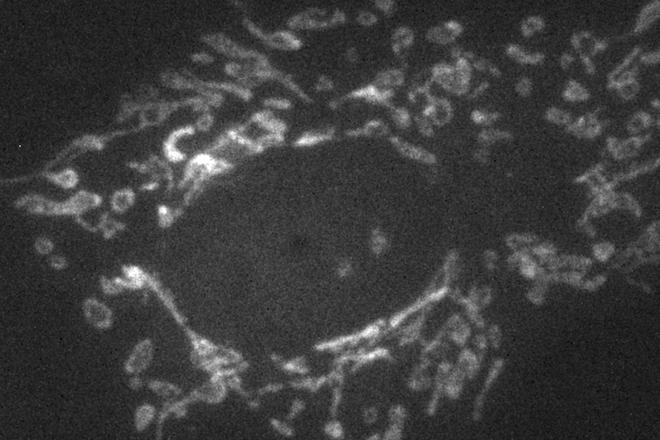

Monochrome cameras are often preferred in imaging applications where maximum sensitivity and fine detail resolution are required. Because every pixel detects the full intensity of incoming light, monochrome sensors can capture weaker signals and subtle structures more effectively than color cameras.

This advantage is particularly important in low-light scientific imaging, where the available signal may already be limited. By detecting all photons that reach the sensor, monochrome cameras provide higher signal levels and therefore a better signal-to-noise ratio and image quality.
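Why extra photons matter in low light can be illustrated with a simplified noise model. Photon arrival follows Poisson statistics, so the shot noise on a signal of N photoelectrons is √N; adding a camera read noise σ gives SNR = N / √(N + σ²). The Python sketch below ignores dark current, and the electron counts are hypothetical values chosen to mirror the idealized 4× photon advantage for red or blue light.

```python
import math

def snr(signal_e, read_noise_e=0.0):
    """Signal-to-noise ratio of one pixel: `signal_e` detected photoelectrons
    with Poisson shot noise plus read noise `read_noise_e` (electrons RMS).
    Dark current is ignored in this simplified model."""
    return signal_e / math.sqrt(signal_e + read_noise_e ** 2)

# Hypothetical faint signal: 25 e- on a colour pixel, 4x as many on a mono pixel
print(snr(25), snr(100))            # 5.0 10.0 (shot-noise limited: 4x signal -> 2x SNR)
print(snr(25, 2.0), snr(100, 2.0))  # with read noise, the relative gain exceeds 2x
```

Because read noise weighs more heavily on small signals, the benefit of collecting more photons grows as the signal gets weaker.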

Monochrome cameras are therefore commonly used in applications such as widefield fluorescence microscopy, astronomical imaging, and other light-limited experiments. They are also well-suited for quantitative imaging tasks where accurate intensity measurements are important.

In these situations, the improved sensitivity and spatial detail offered by monochrome sensors often outweigh the need for direct color information.

When Should You Use a Color Camera?

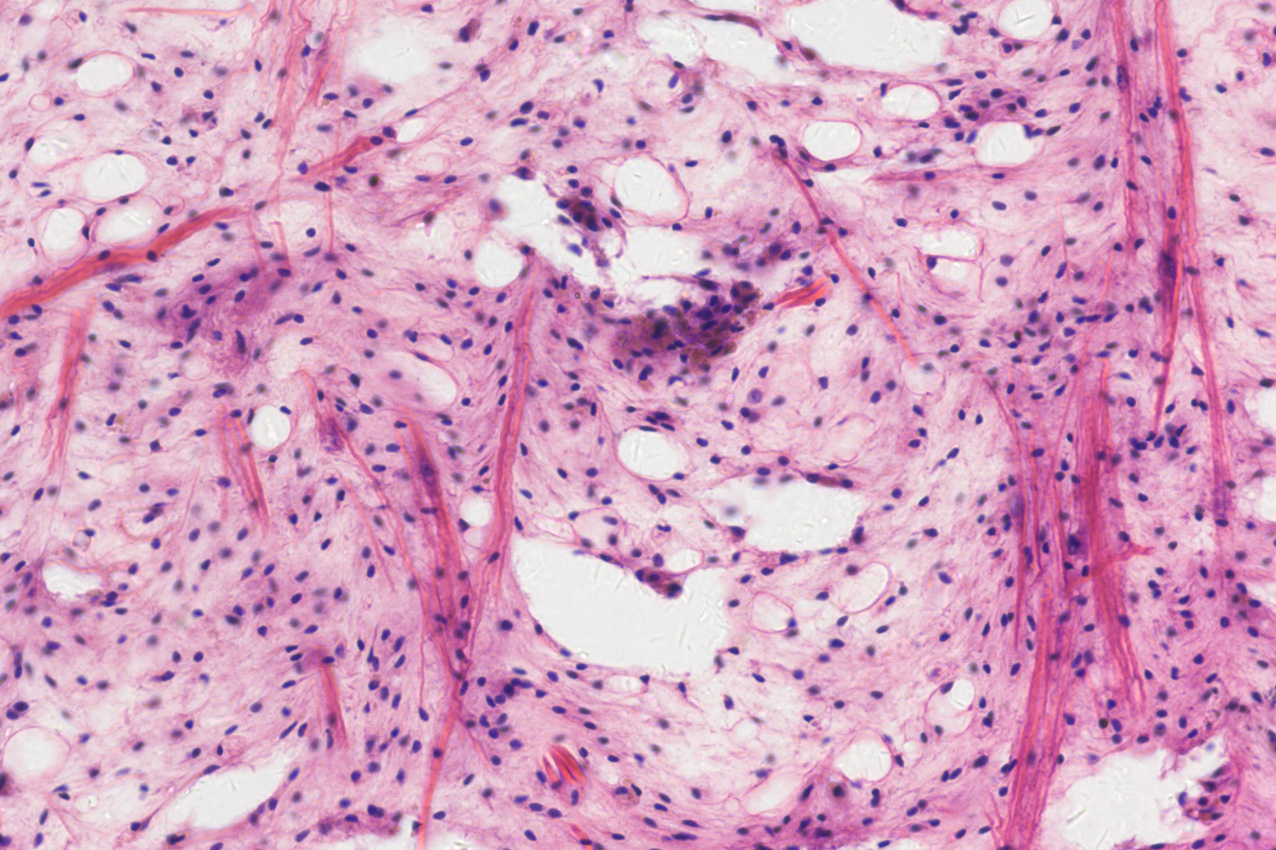

Color cameras are most useful in imaging applications where color information itself is important. Because color sensors capture red, green, and blue information through the Bayer filter, they can generate full-color images in a single exposure.

This allows color cameras to produce color images quickly and efficiently, without the need for additional filters or multiple image acquisitions. In contrast, monochrome systems typically require sequential imaging with different color filters to reconstruct a color image.
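For monochrome systems, the final step of sequential color imaging is to stack the red, green and blue exposures into one image. The Python/NumPy sketch below assumes the three frames are already registered (the sample and camera did not move between exposures); `combine_rgb` and its `gains` argument, a per-channel scale factor such as a white-balance correction, are hypothetical and not parameters of any particular camera software.

```python
import numpy as np

def combine_rgb(red, green, blue, gains=(1.0, 1.0, 1.0)):
    """Stack three monochrome exposures, taken through red, green and blue
    filters, into one H x W x 3 colour image scaled to the range 0..1.

    `gains` is a hypothetical per-channel scale factor (e.g. white balance);
    the three frames are assumed to be registered."""
    frames = [np.asarray(f, dtype=float) * g
              for f, g in zip((red, green, blue), gains)]
    if len({f.shape for f in frames}) != 1:
        raise ValueError("all three exposures must have the same shape")
    rgb = np.stack(frames, axis=-1)
    # Normalise by the brightest value across all channels for display
    peak = rgb.max()
    return rgb / peak if peak > 0 else rgb
```

Normalizing by a single peak across all three channels, rather than per channel, preserves the relative intensities between red, green and blue.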

Color cameras are therefore commonly used in applications such as brightfield microscopy, pathology imaging, material inspection, and documentation imaging, where color differences carry important information.

In these situations, the ability to capture color directly can simplify the imaging workflow and make interpretation of the image data more intuitive.

Mono vs Color Camera: Which Should You Choose?

Choosing between a monochrome and a color camera ultimately depends on the priorities of your imaging application.

If your system requires maximum sensitivity, higher effective resolution, or precise measurement of light intensity, a monochrome camera is typically the better choice. Because every pixel detects the full amount of incoming light, monochrome sensors perform particularly well in low-light and quantitative imaging environments.

If color information is important, however, a color camera may be more appropriate. Color sensors can capture RGB information in a single exposure, allowing full-color images to be produced quickly and efficiently without additional filters or multiple acquisitions.

Conclusion

Choosing between a monochrome and a color camera is a common decision when building a camera system for microscopy and other scientific imaging. Monochrome cameras offer higher sensitivity and better effective resolution because every pixel detects the full intensity of incoming light. Color cameras, on the other hand, allow RGB information to be captured directly, enabling efficient acquisition of full-color images in a single exposure.

In scientific imaging systems, the decision often comes down to whether maximum sensitivity and quantitative accuracy or direct color information is more important for the task.

If you are evaluating camera options for your imaging system, Tucsen offers a range of scientific monochrome and color cameras designed for microscopy, life science, and industrial imaging applications. Our team can help identify the most suitable sensor technology for your specific requirements.

FAQs

Do you need a color camera for scientific imaging?

If low-light imaging is important, a monochrome camera is usually the better choice because it detects more incoming photons and provides higher sensitivity. If color information is essential, a color camera may be preferred since it can capture RGB information directly in a single exposure.

Can a monochrome camera produce color images?

Yes. A monochrome camera can produce color images by capturing multiple images through red, green, and blue filters and combining them. This approach can provide accurate color information but requires additional hardware and multiple exposures.

Why are monochrome cameras more sensitive?

Monochrome cameras are more sensitive because they do not use a color filter array. Every pixel detects the full intensity of incoming light, while color cameras block certain wavelengths through the Bayer filter, reducing the number of photons reaching each pixel.

Are monochrome cameras better for microscopy?

Monochrome cameras are often preferred in microscopy because of their higher sensitivity and better effective resolution, which are important for detecting weak signals. However, color cameras can still be useful in applications where color information helps interpret the sample.

Is a monochrome camera always better than a color camera?

Not always. Monochrome cameras offer higher sensitivity and better effective resolution because every pixel detects the full intensity of incoming light. However, color cameras are better when color information is essential, since they can capture RGB data directly in a single exposure without additional filters or multiple images.

Tucsen Photonics Co., Ltd. All rights reserved. When citing, please acknowledge the source: www.tucsen.com

2022/02/25
